It's been a fun week of blogging about the Leap Motion controller, but now it's finally time to Leap to a conclusion (groan).

We started the week by introducing the device, then used it to navigate models, interact with AutoCAD commands and draw 3D geometry. Today's post is an attempt to summarise the impressions I've formed from working with the Leap Motion controller, and to take a shot at predicting (no doubt inaccurately) where it's going to make the most impact.

Let me start by saying that the Leap Motion controller does what it says on the tin. It's extremely responsive – even when used in "low power" mode in a virtualized OS environment – and highly accurate. I'd say it really does have the potential to revolutionise how we interact with computers on a casual basis or in a shared environment.

My feeling is that in the design space the controller will work really well for collaborative, virtual walkthroughs – I made a similar case for Kinect, for instance – and for applications that require more art than precision (sculpting apps such as Mudbox spring to mind).

There's a time and a place for any technology, though: one of the drawbacks I've found from using the device at my desktop over an extended period is that it tires your hands and arms very quickly (I'd hate to be a demo jock for Leap Motion, although on the positive side I'd probably end up with forearms like Popeye ;-). In fact, after half a day I even started to feel twinges of carpal tunnel coming on (oh, the irony).

Part of the issue I've found stems from the distance you need to maintain from the device for your hands and fingers to be detected: they really have to hover some distance from it. So you can't really lean your wrist on some kind of support and make subtle finger movements. And the lack of tactile feedback doesn't help, either: it's surprising how much difference this makes when attempting to control objects in a virtual space. All of which means the device is fine for casual use but a very different proposition if you're modeling for 30-50 hours a week.

Don't get me wrong, though: I think this is a very interesting piece of technology that we'll hear a great deal about during the course of the next few years (we're starting to see announcements around funding, technology partnerships and their planned retail channel, for instance). Leap Motion has managed to get a lot of people very excited about their technology – especially developers, which will clearly be key to the product's success.

Beyond that, Leap Motion and other gesture control systems will continue their march through the Gartner hype cycle: we're apparently still 2-5 years away from the technology's "plateau of productivity", which doesn't strike me as very long, on balance.

I expect to see some interesting hybrid environments emerge – where you use the Leap Motion controller alongside your existing mouse & keyboard – as well as some specific cases where the device can streamline an existing, more complex input process.

From my side, I'd still like to pursue some additional experiments, such as implementing the ability to "pick up" and manipulate AutoCAD objects in 3D space (which would be pretty tricky to get working well, I think). I'll certainly report back to readers of this blog as I continue my investigations.

In the meantime – what do you think? How do you foresee the technology being used successfully, whether in the design industry or elsewhere?

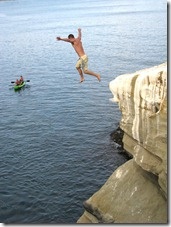

photo credit: Better Than Bacon via photopin cc
