After discovering, earlier in the week, that version 1.5 of the Kinect SDK provides the capability to get a 3D mesh of a tracked face, I couldn't resist seeing how to bring that into AutoCAD (both inside a jig and as database-resident geometry).

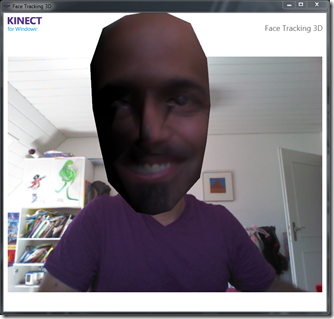

I started by checking out the pretty-cool FaceTracking3D sample, which gives you a feel for how Kinect tracks faces, superimposing an exaggerated, textured mesh on top of your real face in a WPF dialog:

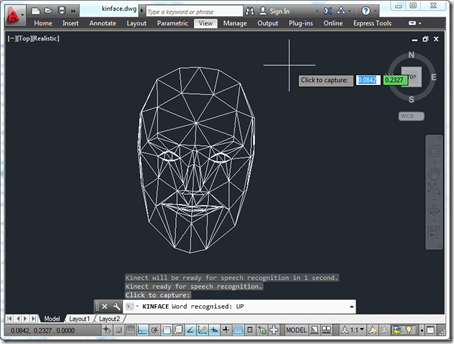

I decided to go for a more minimalist approach for the AutoCAD integration (which also happens to be simpler, with less scary results 🙂): just generating a wireframe view during a jig by drawing a series of polygons…

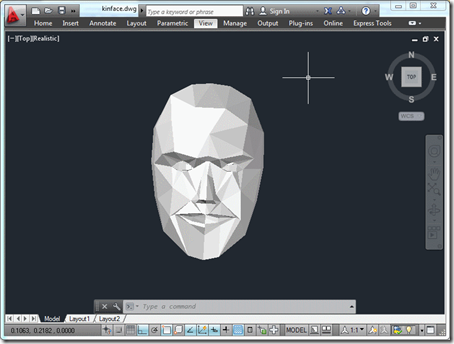

… and then create some database-resident 3D faces once we're done:

Here's the main C# code that does all this (although a few minor changes were needed in the base KinectJig class, to make certain members accessible – you can get the complete source here):

```csharp
using Autodesk.AutoCAD.ApplicationServices;
using Autodesk.AutoCAD.EditorInput;
using Autodesk.AutoCAD.Geometry;
using Autodesk.AutoCAD.Runtime;
using Autodesk.AutoCAD.GraphicsInterface;
using Autodesk.AutoCAD.DatabaseServices;
using Microsoft.Kinect;
using Microsoft.Kinect.Toolkit.FaceTracking;
using System.Collections.Generic;
using System.Linq;

#pragma warning disable 1591

namespace KinectSamples
{
  // A simple, lightweight face definition class to be used
  // inside (and passed outside) the jig

  public class FaceDef
  {
    public FaceDef(Point3d first, Point3d second, Point3d third)
    {
      First = first;
      Second = second;
      Third = third;
    }

    public Point3d First { get; set; }
    public Point3d Second { get; set; }
    public Point3d Third { get; set; }
  }

  public class KinectFaceMeshJig : KinectJig
  {
    private FaceTracker _faceTracker;
    private bool _displayFaceMesh;
    private int _trackingId = -1;
    private FaceTriangle[] _triangleIndices;
    private Point3dCollection _points;
    private List<FaceDef> _faces;

    public List<FaceDef> Faces { get { return _faces; } }

    public KinectFaceMeshJig()
    {
      _displayFaceMesh = false;
    }

    public void UpdateMesh(FaceTrackFrame frame)
    {
      EnumIndexableCollection<FeaturePoint, Vector3DF> pts =
        frame.Get3DShape();

      if (_triangleIndices == null)
      {
        // The triangle definitions don't change from
        // frame to frame

        _triangleIndices = frame.GetTriangles();

        // We'll also create a dummy set of points
        // (as this also has a fixed size)

        _points = new Point3dCollection();
        for (int idx = 0; idx < pts.Count; idx++)
        {
          _points.Add(new Point3d());
        }
      }

      // Create or clear the list of face definitions

      if (_faces == null)
      {
        _faces = new List<FaceDef>();
      }
      else
      {
        _faces.Clear();
      }

      // Update the 3D model's vertices

      for (int idx = 0; idx < pts.Count; idx++)
      {
        Vector3DF point = pts[idx];
        _points[idx] = new Point3d(point.X, point.Y, -point.Z);
      }

      // Create a face definition for each triangle

      foreach (FaceTriangle triangle in _triangleIndices)
      {
        FaceDef face =
          new FaceDef(
            _points[triangle.First],
            _points[triangle.Second],
            _points[triangle.Third]
          );
        _faces.Add(face);
      }
    }

    protected override SamplerStatus SamplerData()
    {
      try
      {
        if (_faceTracker == null)
        {
          try
          {
            _faceTracker = new FaceTracker(_kinect);
          }
          catch (System.InvalidOperationException)
          {
            // During some shutdown scenarios the FaceTracker
            // is unable to be instantiated. Catch that exception
            // and don't track a face

            _faceTracker = null;
          }
        }

        if (
          _faceTracker != null &&
          _colorPixels != null &&
          _depthPixels != null &&
          _skeletons != null
        )
        {
          // Find a skeleton to track. First see if our old one
          // is good. When a skeleton is in PositionOnly tracking
          // state, don't pick a new one as it may become fully
          // tracked again

          Skeleton skeletonOfInterest =
            _skeletons.FirstOrDefault(
              skeleton =>
                skeleton.TrackingId == _trackingId &&
                skeleton.TrackingState !=
                  SkeletonTrackingState.NotTracked
            );

          if (skeletonOfInterest == null)
          {
            // The old one wasn't around. Find any skeleton that
            // is being tracked and use it

            skeletonOfInterest =
              _skeletons.FirstOrDefault(
                skeleton =>
                  skeleton.TrackingState ==
                    SkeletonTrackingState.Tracked
              );
          }

          // Call a different version of the function if we have
          // a tracked skeleton. We're also lazily hard-coding the
          // depth and image formats, for now (sorry!)

          FaceTrackFrame faceTrackFrame =
            (skeletonOfInterest == null ?
              _faceTracker.Track(
                ColorImageFormat.RgbResolution640x480Fps30,
                _colorPixels,
                DepthImageFormat.Resolution640x480Fps30,
                _depthPixels
              )
              :
              _faceTracker.Track(
                ColorImageFormat.RgbResolution640x480Fps30,
                _colorPixels,
                DepthImageFormat.Resolution640x480Fps30,
                _depthPixels,
                skeletonOfInterest
              )
            );

          if (faceTrackFrame.TrackSuccessful)
          {
            this.UpdateMesh(faceTrackFrame);

            // Only display face mesh if there was a successful track

            _displayFaceMesh = true;
          }
        }
      }
      catch (System.Exception ex)
      {
        Autodesk.AutoCAD.ApplicationServices.Application.
          DocumentManager.MdiActiveDocument.Editor.WriteMessage(
            "\nException: {0}", ex
          );
      }

      ForceMessage();

      return SamplerStatus.OK;
    }

    protected override bool WorldDrawData(WorldDraw draw)
    {
      if (_displayFaceMesh)
      {
        // Reuse a single three-point collection for every triangle

        Point3dCollection pts = new Point3dCollection();
        pts.Add(new Point3d());
        pts.Add(new Point3d());
        pts.Add(new Point3d());

        foreach (FaceDef face in _faces)
        {
          pts[0] = face.First;
          pts[1] = face.Second;
          pts[2] = face.Third;

          draw.Geometry.Polygon(pts);
        }
      }
      return true;
    }
  }

  public class KinectFaceCommands
  {
    [CommandMethod("ADNPLUGINS", "KINFACE", CommandFlags.Modal)]
    public void ImportFaceFromKinect()
    {
      Document doc =
        Autodesk.AutoCAD.ApplicationServices.
          Application.DocumentManager.MdiActiveDocument;
      Editor ed = doc.Editor;

      KinectFaceMeshJig kj = new KinectFaceMeshJig();

      kj.InitializeSpeech();

      if (!kj.StartSensor())
      {
        ed.WriteMessage(
          "\nUnable to start Kinect sensor - " +
          "are you sure it's plugged in?"
        );
        return;
      }

      PromptResult pr = ed.Drag(kj);

      if (pr.Status != PromptStatus.OK && !kj.Finished)
      {
        kj.StopSensor();
        return;
      }

      kj.StopSensor();

      if (kj.Faces != null && kj.Faces.Count > 0)
      {
        Transaction tr =
          doc.TransactionManager.StartTransaction();
        using (tr)
        {
          var bt =
            (BlockTable)tr.GetObject(
              doc.Database.BlockTableId, OpenMode.ForRead
            );

          var btr =
            (BlockTableRecord)tr.GetObject(
              bt[BlockTableRecord.ModelSpace], OpenMode.ForWrite
            );

          // Create a 3D face for each definition

          foreach (var fd in kj.Faces)
          {
            Face face =
              new Face(
                fd.First, fd.Second, fd.Third,
                false, false, false, false
              );
            btr.AppendEntity(face);
            tr.AddNewlyCreatedDBObject(face, true);
          }

          tr.Commit();
        }
      }
    }
  }
}
```
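The heart of UpdateMesh() is an indexed-mesh expansion: the tracker supplies one list of vertices per frame plus a fixed list of index triples, and each triple becomes one triangle. Here's a minimal sketch of just that step, with the Z coordinate negated as in the code above. It assumes nothing beyond the base class library: `Vec3`, `Tri`, `MeshSketch`, `FlipZ` and `BuildFaces` are hypothetical stand-ins invented for illustration, not types or methods from the Kinect toolkit or AutoCAD.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical stand-ins for the toolkit's Vector3DF and FaceTriangle,
// so this sketch compiles without the Kinect or AutoCAD assemblies.
public struct Vec3
{
    public double X, Y, Z;
    public Vec3(double x, double y, double z) { X = x; Y = y; Z = z; }
}

public struct Tri
{
    public int First, Second, Third;
    public Tri(int first, int second, int third)
    {
        First = first; Second = second; Third = third;
    }
}

public static class MeshSketch
{
    // Negate Z for every vertex, mirroring the sign flip UpdateMesh()
    // applies when it converts a tracked point to a Point3d.
    public static Vec3[] FlipZ(IList<Vec3> tracked)
    {
        var result = new Vec3[tracked.Count];
        for (int i = 0; i < tracked.Count; i++)
        {
            result[i] = new Vec3(tracked[i].X, tracked[i].Y, -tracked[i].Z);
        }
        return result;
    }

    // Expand the index triples into explicit triangles, one per face.
    public static List<Vec3[]> BuildFaces(
        Vec3[] points, IEnumerable<Tri> triangles)
    {
        var faces = new List<Vec3[]>();
        foreach (Tri t in triangles)
        {
            faces.Add(
                new[] { points[t.First], points[t.Second], points[t.Third] });
        }
        return faces;
    }
}
```

Because the index triples are the same for every frame, only the vertex list needs refreshing each time. That is why the jig caches `_triangleIndices` once and rebuilds `_faces` from the updated points on every successful track.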

And here's a quick video to give you a feel for how the tracking works (although it's smoother when not recording, I've found):
